In an age where misinformation can travel at the speed of light, discerning fact from fabrication has become increasingly difficult. One of the most insidious forms of digital deception is the deepfake: synthetic media, generated with AI, in the form of hyper-realistic images, audio, and video that can mislead viewers. While deepfake tools have drawn attention for their potential misuse, solutions are emerging to counter their spread. Researchers like Siwei Lyu of the University at Buffalo are at the forefront of this effort, developing platforms such as the DeepFake-o-Meter to help the public navigate this perilous landscape.

Deepfakes exemplify a broader problem of digital misinformation, in which unverified content proliferates across platforms and threatens both individuals and public discourse. The rapid dissemination of misleading media can sway public opinion, disrupt elections, and even endanger lives. Because traditional verification methods often fall short in real-time scenarios, there is a pressing need for accessible detection tools that anyone can use, not just researchers or professionals.

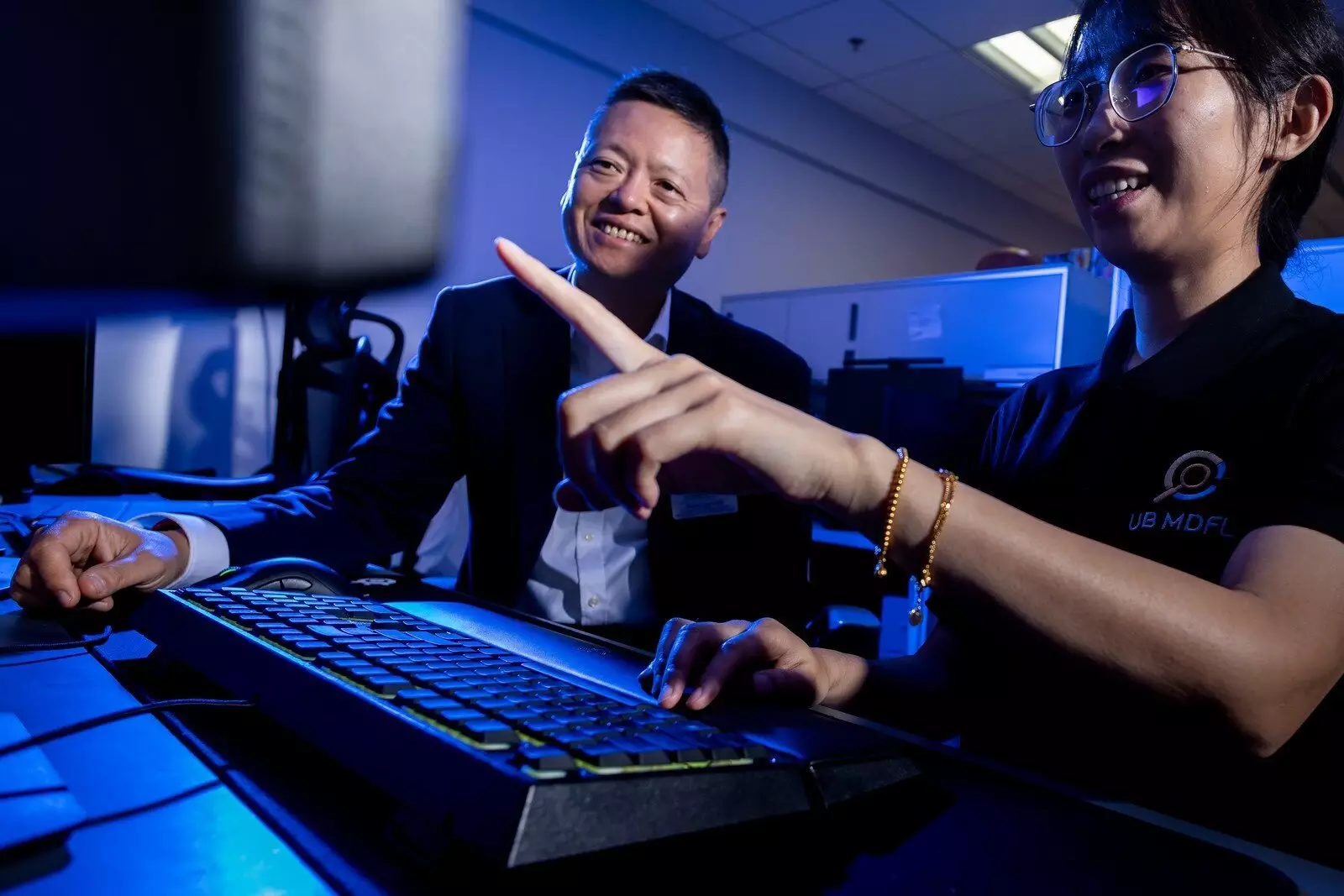

Lyu, a prominent deepfake analyst, highlights this need by illustrating how individuals often find themselves at the mercy of technical experts to assess the credibility of digital media. He remarks that during critical moments, such as breaking news events, the delay in getting a reliable analysis can have dire consequences. To bridge this gap, he and his team have designed the DeepFake-o-Meter, an open-source platform that democratizes access to advanced detection algorithms, empowering everyday users to verify content with ease.

Using the DeepFake-o-Meter is as straightforward as uploading a media file. Users drag and drop an image, video, or audio clip, then choose among several detection algorithms, each listed with metrics such as accuracy and processing time to help guide the choice. Instead of offering a simplistic “true or false” answer, the platform reports the likelihood that the content is AI-generated, leaving users to draw an informed conclusion about the media in question.
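To make the difference between a likelihood report and a binary verdict concrete, here is a minimal Python sketch. It is not the DeepFake-o-Meter's code or API: the `DetectorResult` structure, the `summarize` function, and the detector names and scores are all hypothetical, chosen only to show how several detectors' likelihoods could be presented side by side.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class DetectorResult:
    """One detector's output for a single media file (hypothetical structure)."""
    detector: str      # illustrative name, not a real algorithm on the platform
    likelihood: float  # estimated probability in [0, 1] that the media is AI-generated


def summarize(results: list[DetectorResult]) -> dict:
    """Report each detector's likelihood as a percentage, plus mean and spread.

    No threshold is applied, so no "real" or "fake" label is produced;
    the reader is left to interpret the numbers.
    """
    if not results:
        raise ValueError("at least one detector result is required")
    for r in results:
        if not 0.0 <= r.likelihood <= 1.0:
            raise ValueError(f"likelihood out of range for {r.detector}")

    scores = [r.likelihood for r in results]
    return {
        "per_detector": {r.detector: round(r.likelihood * 100, 1) for r in results},
        "mean_percent": round(mean(scores) * 100, 1),
        # A large spread means the detectors disagree, which is itself useful to know.
        "spread_percent": round((max(scores) - min(scores)) * 100, 1),
    }


if __name__ == "__main__":
    # Made-up scores for illustration only.
    report = summarize([
        DetectorResult("detector_a", 0.75),
        DetectorResult("detector_b", 0.5),
        DetectorResult("detector_c", 0.25),
    ])
    print(report)
```

Showing the spread alongside the individual scores is one design choice among many. It signals when the detectors disagree, and that disagreement is exactly what a single yes-or-no answer would hide.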

In one demonstration, the DeepFake-o-Meter outperformed several other online tools in analyzing a widely circulated audio clip of a fake Joe Biden robocall, reporting a 69.7% likelihood that the audio was AI-generated. Lyu’s commitment to transparency also stands out: because the platform is open source, users can scrutinize the methods behind the results they receive, which promotes a more informed public discourse.

A significant aim of the DeepFake-o-Meter is to connect two communities: the general public and academic researchers. Users can opt in to share their uploads with researchers, giving them real-world examples to use in refining detection algorithms. Nearly 90% of submissions thus far have come from users who already suspected the content was inauthentic, underscoring public awareness of deepfake risks.

Lyu emphasizes that to remain effective, the algorithms must continuously evolve alongside the increasingly sophisticated deepfakes. Employing real-world data plays a crucial role in this process; it’s essential for developers of detection tools to understand the context and characteristics of current deepfake trends. This dynamic exchange of information can provide key insights to enhance future detection methods, making the tool not just a static solution but an evolving, responsive defense against misinformation.

While the immediate goal of the DeepFake-o-Meter is to identify AI-generated content, Lyu envisions a more ambitious future. One potential advancement is identifying the specific tools used to create a deepfake, which could help trace it back to its origin and discern malicious intent. This capability would ideally serve not only as a detection mechanism but also as part of a broader strategy against digital misinformation.

However, Lyu warns that reliance should not fall solely on technology. Human judgment remains vital in assessing context and interpreting nuances that algorithms might miss. Ensuring a symbiotic relationship between human cognition and machine learning will enhance the overall effectiveness of misinformation detection efforts.

Ultimately, Lyu’s aspiration for the DeepFake-o-Meter transcends its utility as a detection tool; he hopes it will foster a collaborative online community. Think of it as a vibrant marketplace where individuals interested in combating misinformation can exchange ideas, develop strategies, and support one another in identifying deceitful content. By creating a network of informed users, the platform can encourage diversity of thought and collective vigilance, vital components in the ongoing fight against deepfakes.

The DeepFake-o-Meter represents a significant stride toward democratizing the resources needed to combat misinformation. As digital tools continue to advance, the synergy between technology and user engagement may prove key to navigating and mitigating the darker corners of our digital landscape.